Responsible AI

Mitigate AI risks with tools for Responsible AI

Organizations use Verta to achieve transparency, fairness, privacy, robustness, and accountability in AI, ensuring that outputs are unbiased, actions are traceable, and AI decisions are clear and explainable.

What Verta can solve

Responsible AI requires AI systems to be transparent, fair, and privacy-conscious, the core characteristics of ethical AI use.

Amid rising concerns over AI ethics, organizations must adopt Responsible AI practices that emphasize fairness, transparency, accountability, privacy, and safety.

Govern all your ML assets in a single, secure catalog that ensures transparency and safety

Publish all model metadata, documentation and artifacts to a central, searchable catalog

Give non-technical stakeholders like Risk Management, Governance and Legal full visibility into your complete AI portfolio

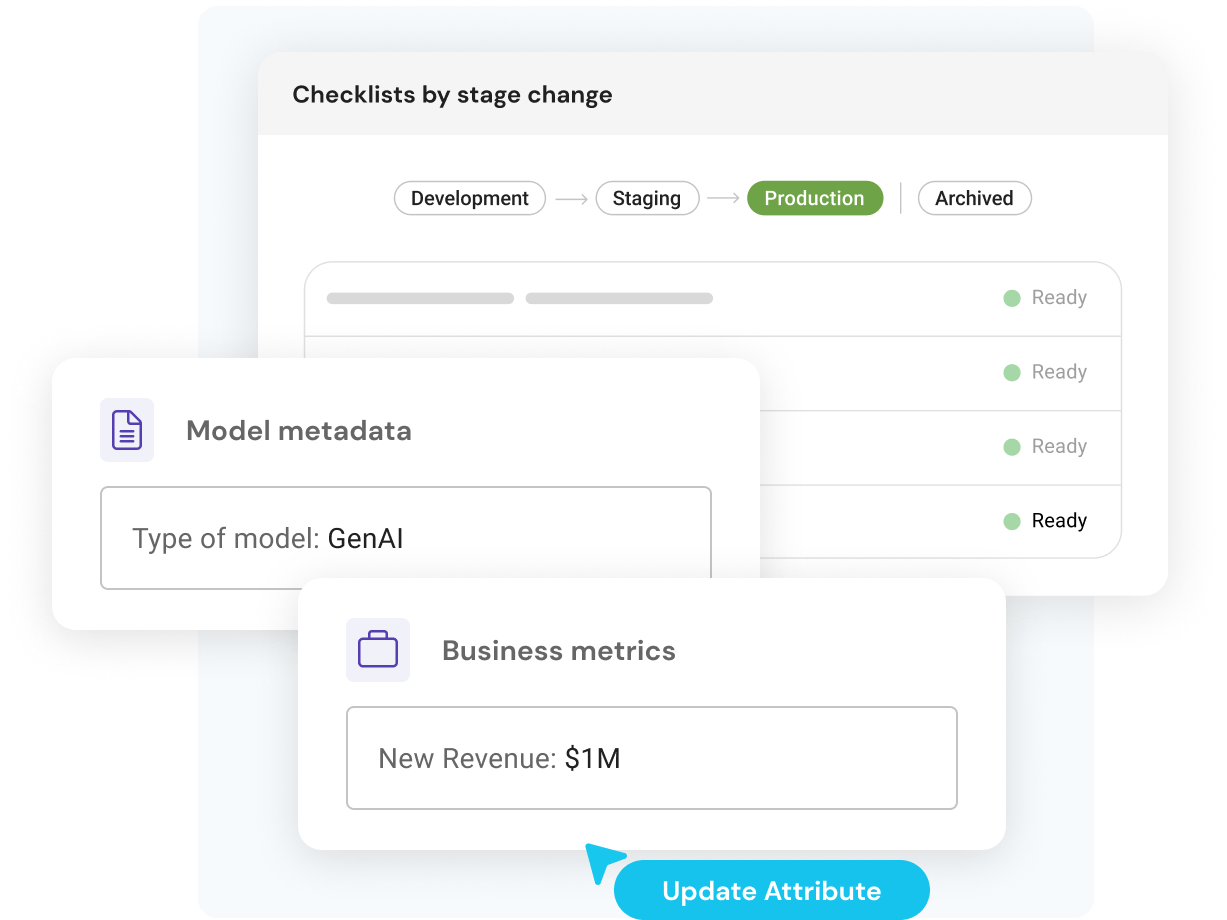

Tag and track model assets for attributes related to Responsible AI such as PII, EU AI Act risk level, generative AI or copyrighted content

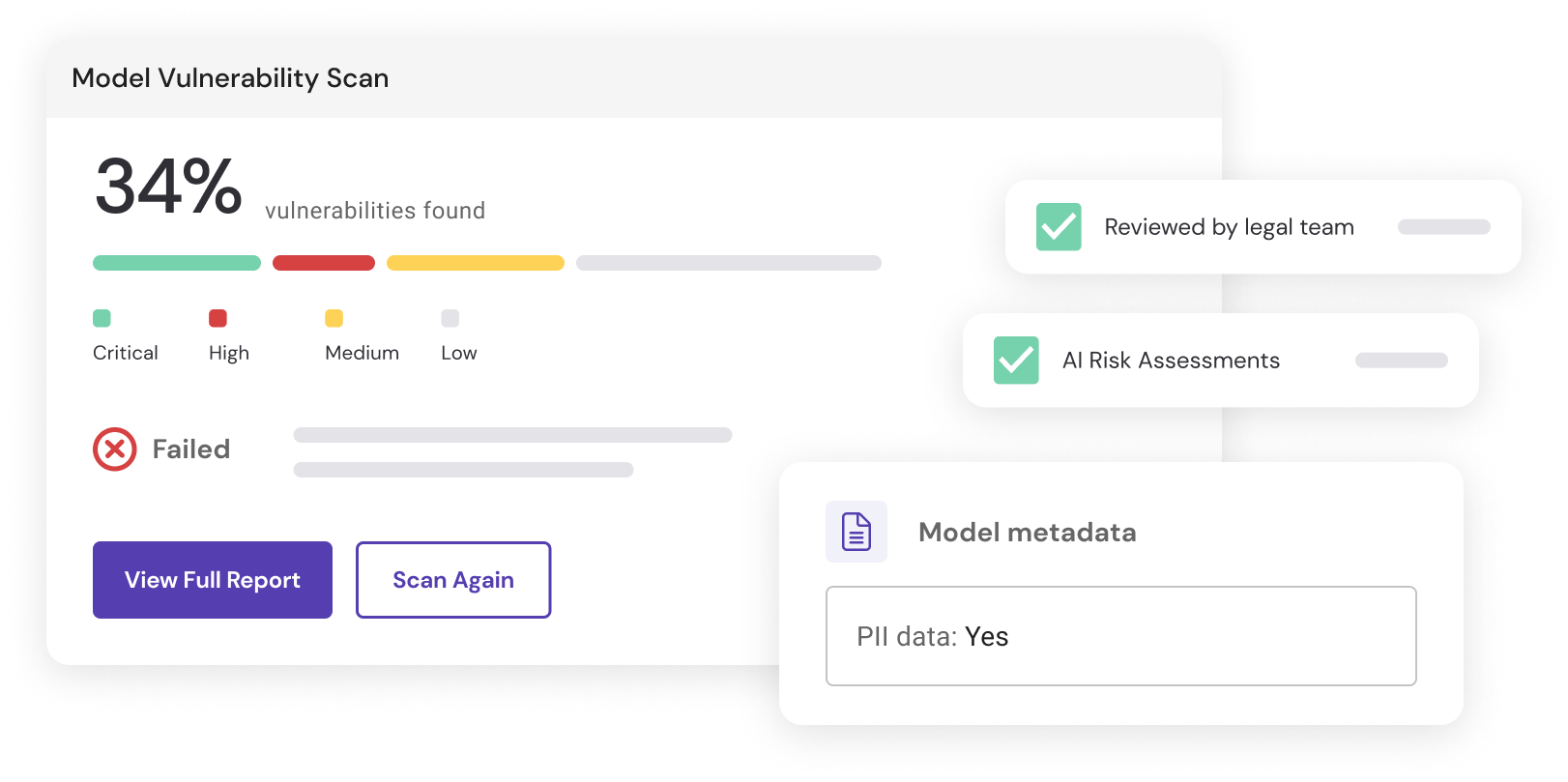

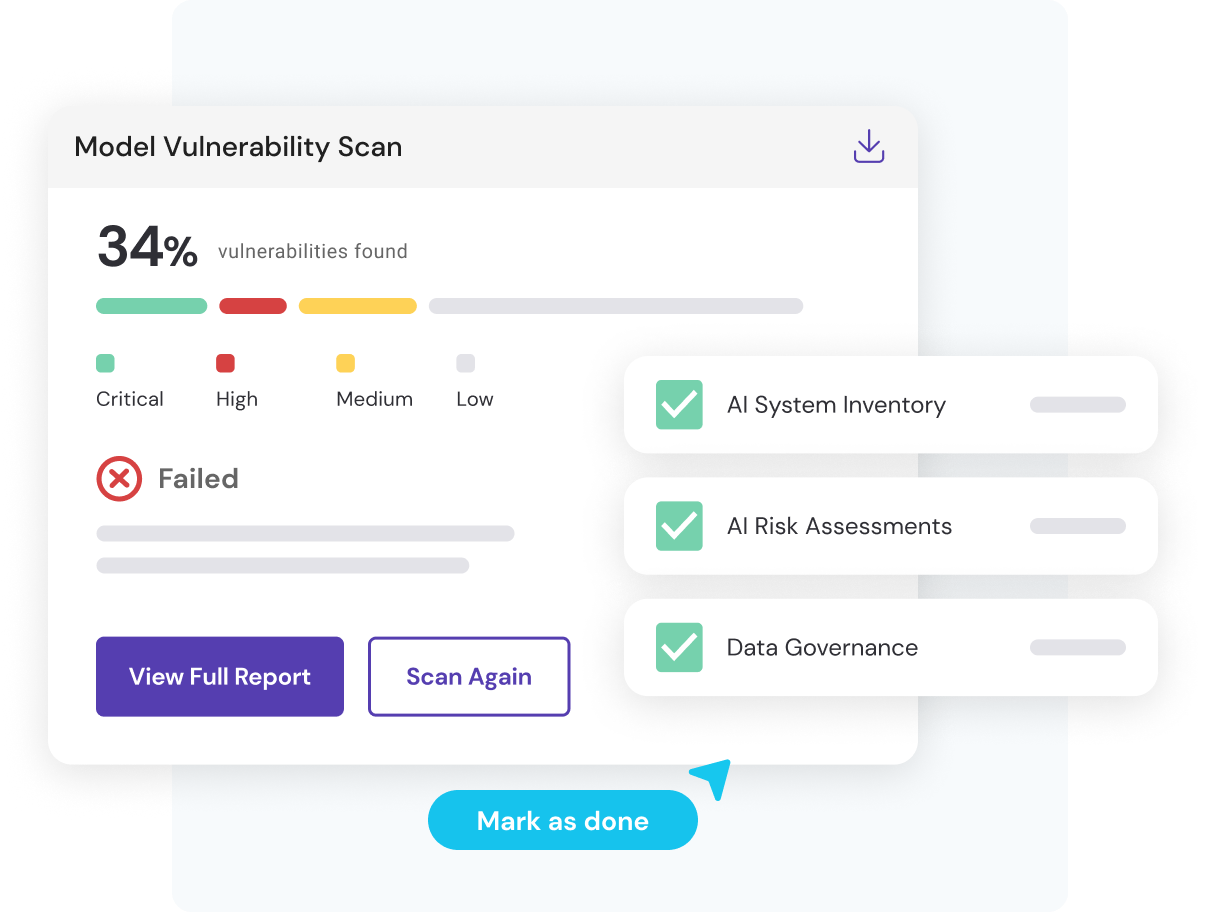

Ensure models adhere to Responsible AI principles before deployment and during production

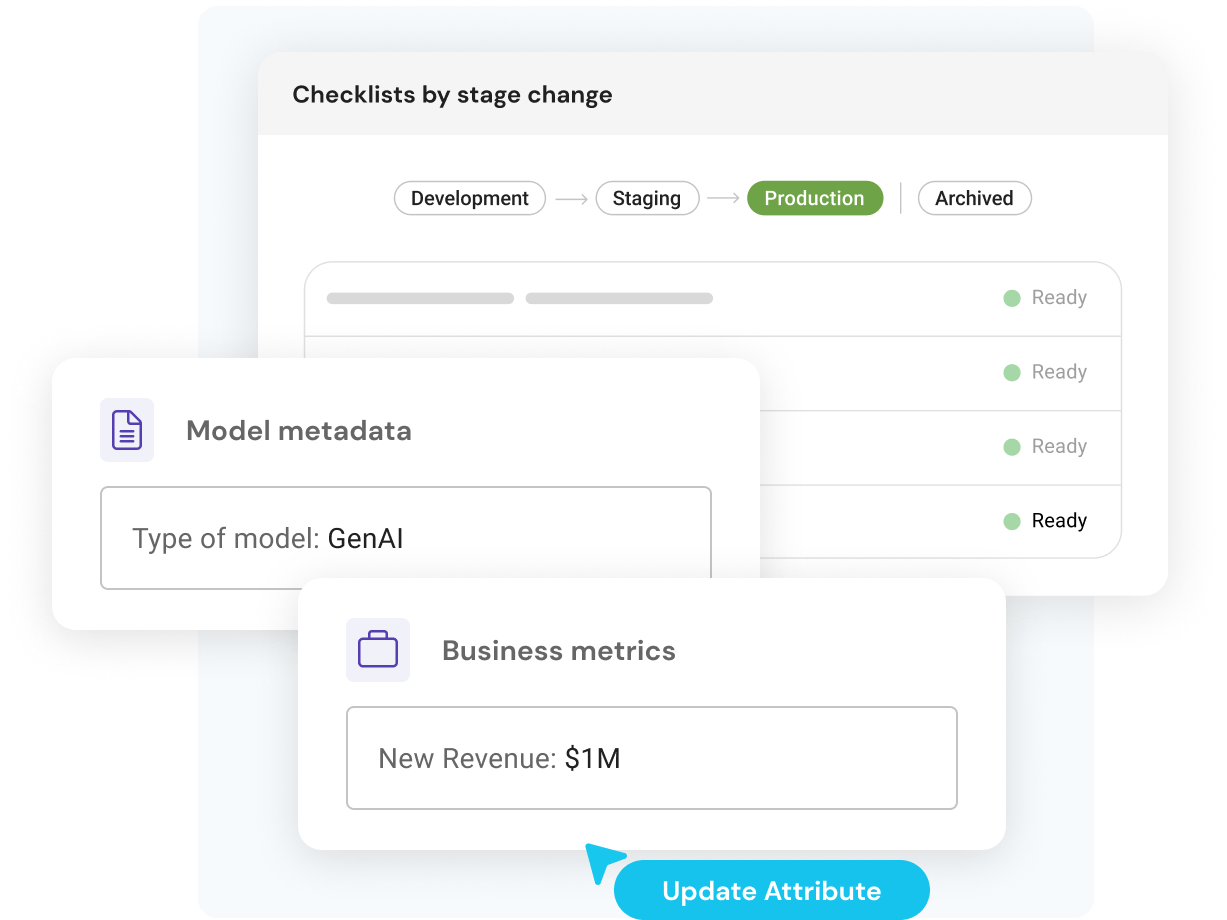

Configure standardized governance checklists to ensure models are fully compliant before release

Validate that models pass essential process gates such as legal review, explainable AI validation, and assessments for ethics, bias and fairness
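As an illustration of how a release gate of this kind can work, here is a minimal Python sketch. It is not Verta's API: the `ChecklistItem` and `ReleaseChecklist` classes and the "marketing sign-off" item are hypothetical, and the remaining gate names are simply taken from the list above. The idea is that a model is releasable only when every required item has been completed.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ChecklistItem:
    """One process gate (hypothetical structure, for illustration only)."""
    name: str
    required: bool = True
    completed: bool = False


@dataclass
class ReleaseChecklist:
    """A standardized checklist that a model must pass before release."""
    items: List[ChecklistItem] = field(default_factory=list)

    def missing(self) -> List[str]:
        # Required gates that have not been completed yet.
        return [i.name for i in self.items if i.required and not i.completed]

    def ready_for_release(self) -> bool:
        # Releasable only when no required gate is outstanding.
        return not self.missing()


checklist = ReleaseChecklist([
    ChecklistItem("legal review", completed=True),
    ChecklistItem("explainable AI validation", completed=True),
    ChecklistItem("ethics, bias and fairness assessment"),
    ChecklistItem("marketing sign-off", required=False),  # optional gate
])

if not checklist.ready_for_release():
    print("Blocked, outstanding gates:", checklist.missing())
```

Optional items never block a release in this sketch; only required gates do.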

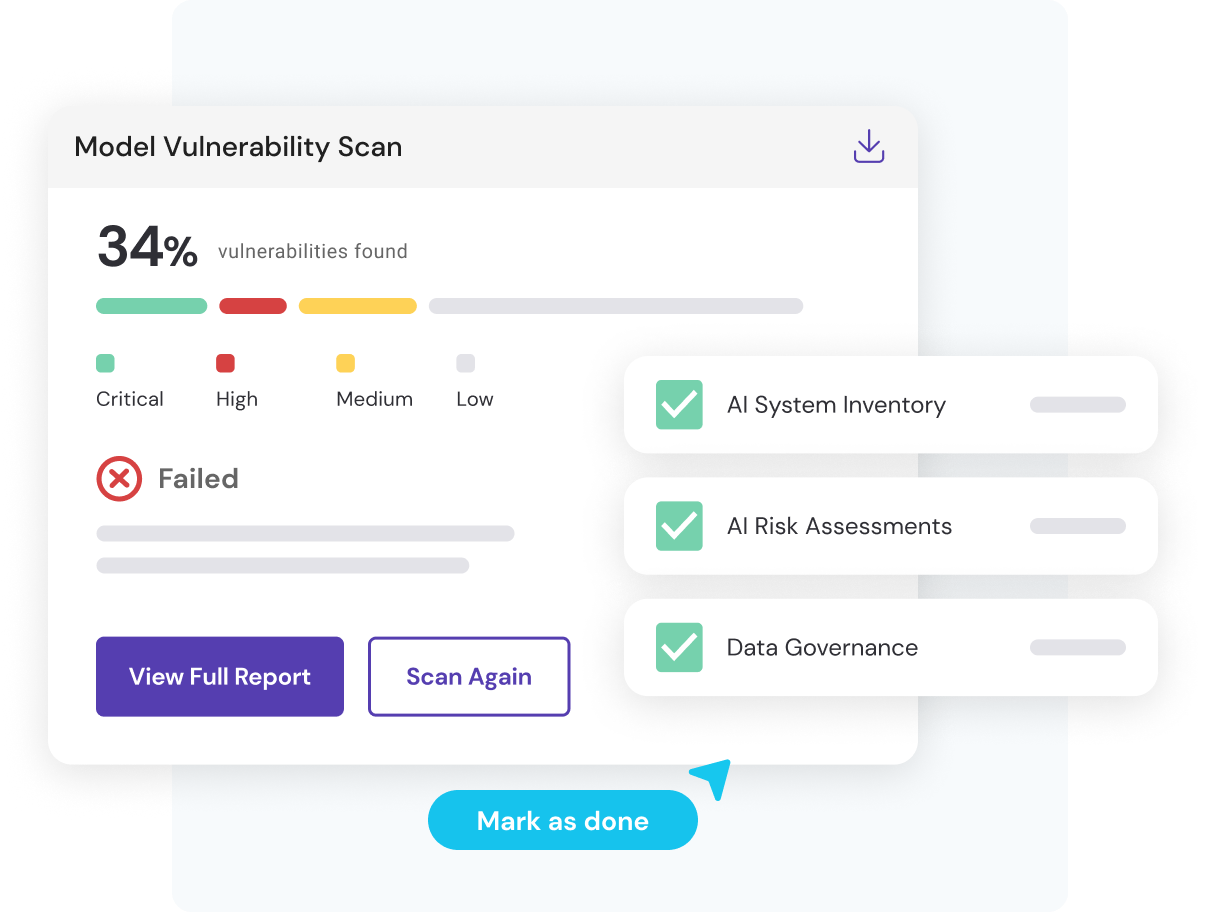

Scan models for vulnerabilities, and easily review training data for bias

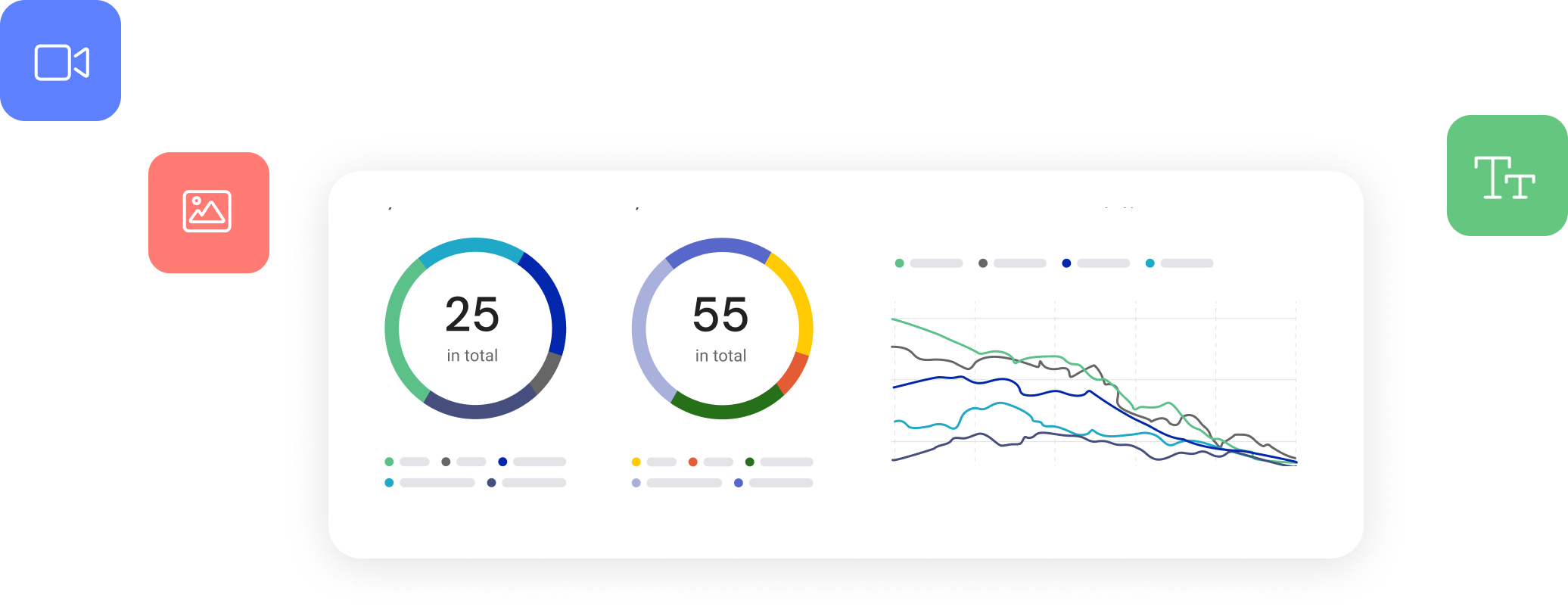

Track input, output and intermediate results, with fully configurable performance monitoring for any serving infrastructure

Quickly identify issues such as drift, bias or accuracy degradation, and know when models are failing through actionable alerts

Support transparency, auditability and explainability with complete and accurate model documentation

Use the AI Assisted Documentation copilot to create robust model documentation quickly and easily

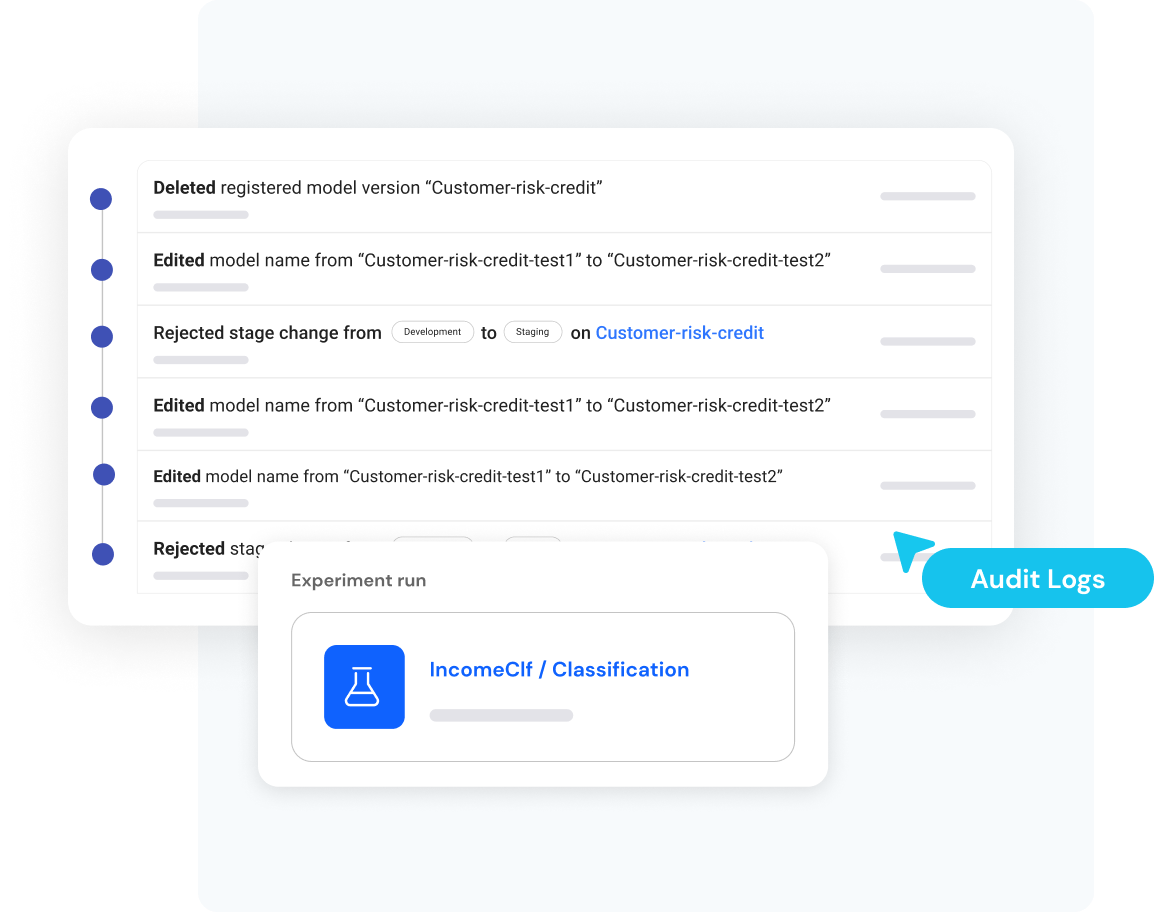

Track and visualize ML experimentation, and achieve full model reproducibility by capturing code, data, configuration and environment variables
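One way to picture reproducibility tracking is a single fingerprint per training run, derived from the four inputs named above. The sketch below is an assumption-laden illustration, not Verta's implementation: `run_fingerprint` and its parameters are hypothetical, and the code version is assumed to be something like a commit hash.

```python
import hashlib
import json
from typing import Any, Dict


def run_fingerprint(
    code_version: str,
    data_bytes: bytes,
    config: Dict[str, Any],
    environment: Dict[str, str],
) -> str:
    """Deterministic SHA-256 fingerprint of everything needed to rerun a training job."""
    record = {
        "code_version": code_version,  # e.g. a commit hash (assumed)
        "data_sha256": hashlib.sha256(data_bytes).hexdigest(),
        "config": config,
        "environment": environment,
    }
    # Sorted keys and fixed separators make the JSON canonical,
    # so dictionary ordering does not change the fingerprint.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


fingerprint = run_fingerprint(
    code_version="abc1234",
    data_bytes=b"feature_a,feature_b,label\n1,2,0\n",
    config={"learning_rate": 0.01, "epochs": 5},
    environment={"PYTHONHASHSEED": "0"},
)
print(fingerprint)
```

Two runs with the same fingerprint used the same code, data, configuration and environment variables; any change to one of them produces a different fingerprint.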

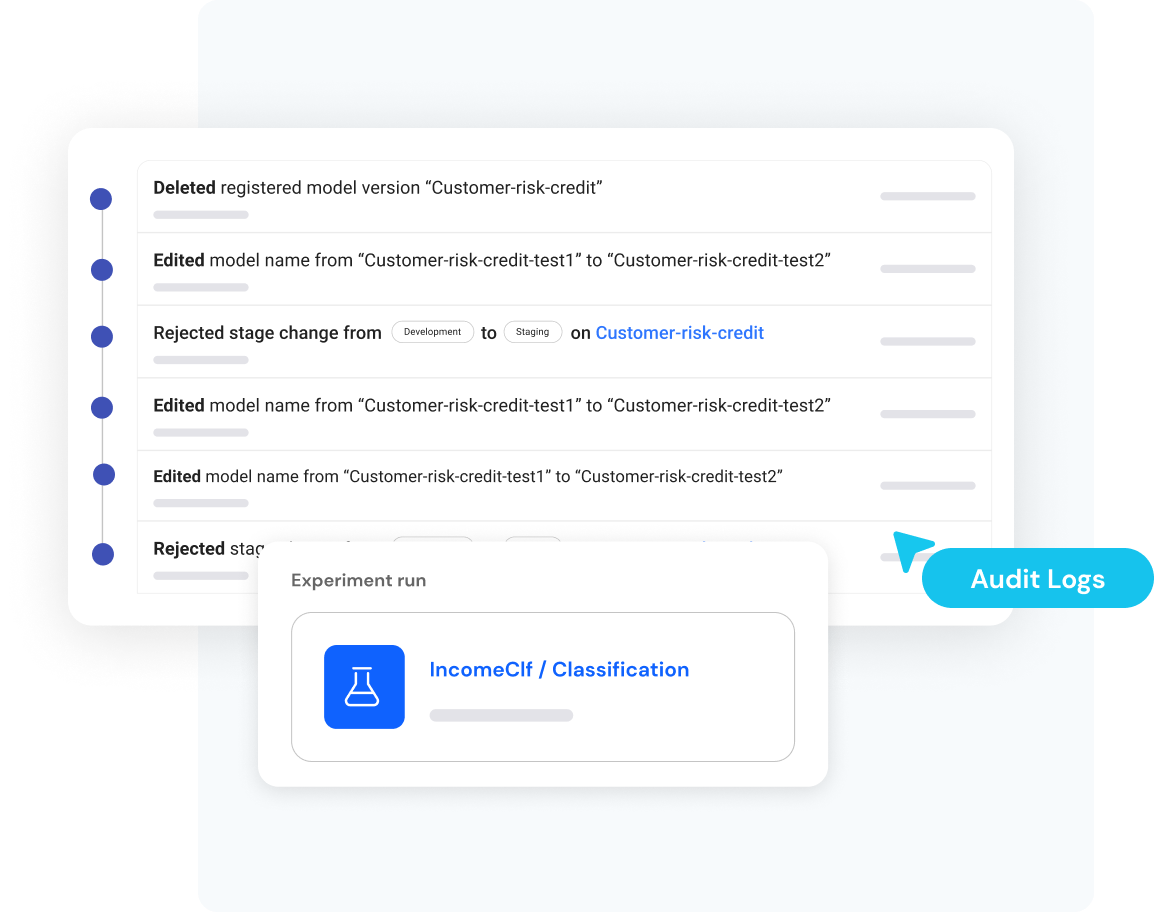

Access detailed audit logs to demonstrate compliance with regulatory requirements