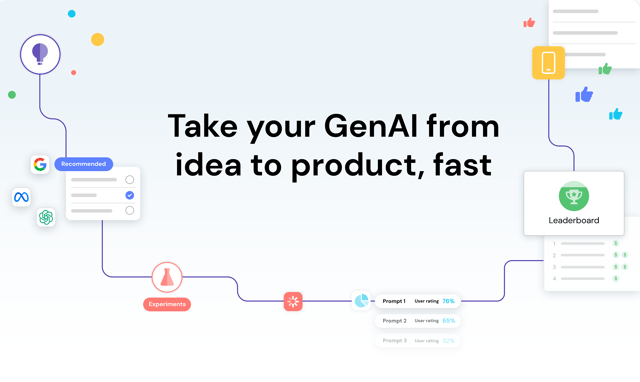

The Verta GenAI Workbench is your one-stop shop for taking a Generative AI project from idea to launch. Go from prototyping with GPT, to producing on-brand LLM outputs by gathering human feedback, to deploying the resulting GenAI app, all in a single platform.

The Workbench accelerates building with LLMs for creators of all technical levels. Simply describe your use case and provide some examples, and the platform will recommend relevant models and high-quality prompts tailored to your needs. Run automated and human-in-the-loop evaluations to rank model and prompt combinations on a Leaderboard. Then deploy your best-performing configuration as an applet, all from the Workbench. Your Leaderboard continues to update as user feedback flows in, driving continuous iteration and improvement.

Why did we build the GenAI Workbench?

Over the past few years, our team has been developing foundational technology for model management and operations. Starting with the launch of ModelDB, a pioneering model versioning system, Verta has evolved into an automated platform for scaling model delivery, monitoring, and governance for high-throughput applications, powering systems ranging from underwriting at a Top-5 insurer to fraud detection at global stock exchanges.

The GenAI Workbench builds on these innovations and solves a critical problem for GenAI builders: crossing the chasm between the initial hype around Generative AI and real productivity and success with LLMs.

Over the past several months, we've worked closely with our customers and partners on GenAI proofs of concept (POCs) and developed our own Generative AI applications, learning a great deal along the way. Here are the main challenges we've observed:

- Versioning & management: As more intelligent apps, assistants, and agents are built, core operations like model management, configuration management (prompt/instructions management), and dataset management become more crucial than ever. We need to be able to answer questions like "Which version of GPT and which prompt/instructions produced hallucinations about presidential candidates?"

- Rapid experimentation: The landscape of GenAI models continues to evolve, and so does the set of tools, techniques, and best practices for building GenAI apps. As a result, GenAI builders need effective ways to experiment with the combinatorial explosion of models, prompts/instructions, pipelines, and data formats, and to choose the combination that best fits their needs.

- Efficient operations: GPT-3 and now GPT-4 set the bar for performance on a variety of tasks. But these GPTs are expensive, both in dollars and in compute (for that matter, so is Llama 2). Serving GenAI models more efficiently and choosing appropriately sized models is crucial to making GenAI applications cost-effective.

- Simplicity: The people building with GenAI and wanting to leverage it are often not data scientists; sometimes they are not even coders. Additionally, GenAI applications often require expertise from non-technical professionals (e.g., marketing assistants or customer success managers). If the tools for building with LLMs are not accessible to everyone, regardless of technical ability, the pace of innovation, and the quality of GenAI apps, will suffer.

- Evaluation & feedback: Finally, GenAI is useful only if it produces high-quality results for the specific application. LLMs are getting better at assessing model outputs, but human feedback, particularly from subject-matter experts (SMEs), is critical. We need much better ways to incorporate this feedback to ensure that our GenAI apps work as intended.

The newest addition to the Verta platform, the Verta GenAI Workbench, was built to solve these challenges. It draws on our team's expertise in versioning, management, and serving, our hands-on knowledge of what it takes to build successful LLM apps, and our strategic decision to bring on data annotation veterans.

The Generative AI revolution is happening now, and teams can't afford delays or roadblocks. With the Workbench, the time to launch an LLM application drops from months to days or even hours, and quality control is built in from end to end.

Learn more

For an in-depth look at our capabilities, I invite you to read our full launch blog post and check out the platform here. In the coming weeks, we will open up direct API access to our system, including model serving, management, and feedback APIs.

Reach out to us for early access!